Join Scalar Conference 2026 and prepare for two exciting days of learning about functional programming with a fantastic community!

Meet experienced IT experts, make friends with people who share your interests, and discover valuable insights. All Scalarians will tell you that making connections at Scalar is one of the best parts!

All of this while getting to know functional programming trends and use cases alongside an engaged community, and, of course, having extra fun at the epic after party. You really can't miss it!

Scalar Conf was fun. I learned new things, I met old friends, I made new acquaintances, and it felt good to be in the presence of such talented people. 10/10, will come again.

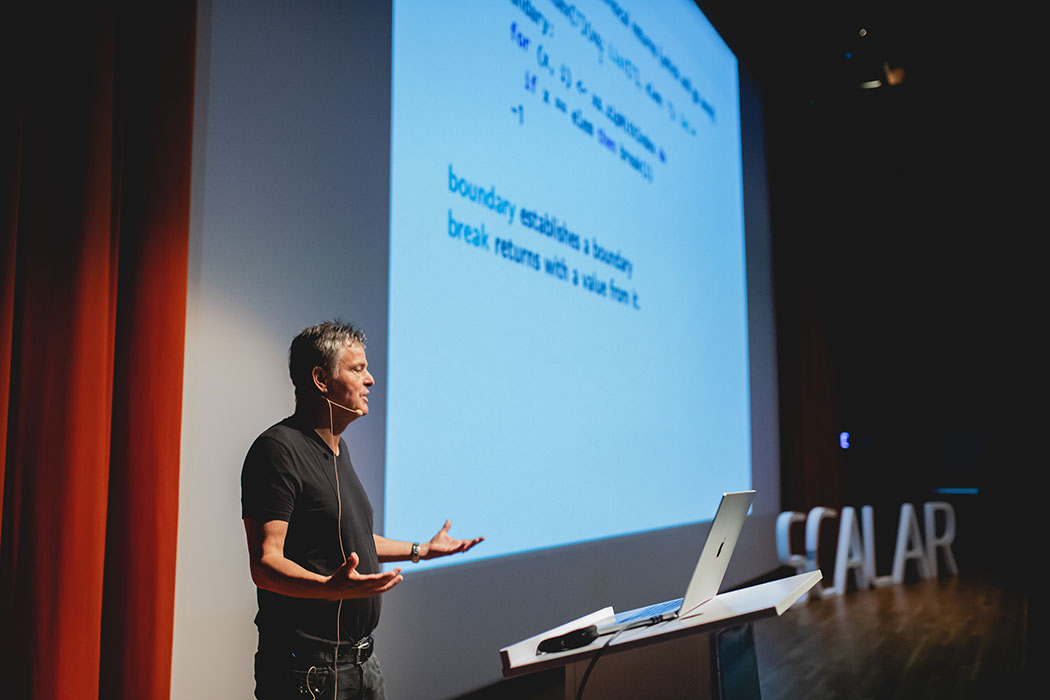

Renowned speakers

Hours of informative talks

Days of functional programming insights

After party to make friends

Drive engagement for your brand. Scalar is a platform that has been bringing the Scala community closer together since 2014. Join us!

Become a Sponsor